CEL and stats, and stats, and stats

Written by Pascal Touset on 18th March 2015

Our switch to CEL

Not long ago we switched our call activity tracking technology from Asterisk CDRs to Channel Event Logging (CEL). CEL provides better accuracy with complex calls, such as calls with queues, or calls with transfers to other phones or call groups.

If you are interested in more in-depth knowledge about the technology behind CEL, Luit has written some very interesting stuff about it. My focus is on what possibilities CEL has offered us. This article specifically addresses how our statistics module uses CEL data.

Stats

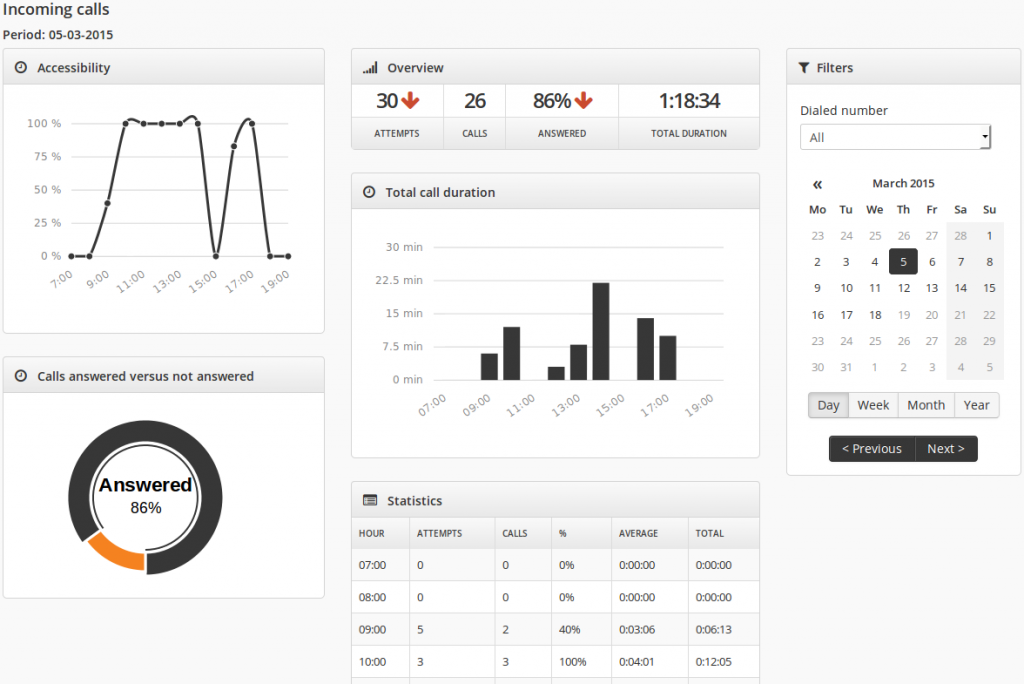

Customers on our platform can view statistics on their own calling behaviour. They can monitor how many of their incoming calls are answered, or view charts to see trends on when and how much they are on the phone. This way, a customer may find a bottleneck in his telephony and fix it.

For instance, a freelancing consultant sees he is missing a lot of calls in the evening and decides to transfer all calls to his business phone after 6pm to his cell phone. He never misses out on a customer again, his business thrives and he can take his family out for dinner every week! (And while being there, be on the phone all the time – his responsibility.)

Python-time vs query-time

The data for creating the charts above comes out of database tables containing aggregated call data. This data is created by a python cron job, which had to undergo a complete makeover to make the transition from CDRs as data source to CEL. The main theme here is performance.

The cron job is run each night. It retrieves calls from the database and then calculates stats records from this call data. On a development machine with a test database containing a few million records, this is no problem at all. On a live environment, the description ‘different’ fits best.

First and foremost it is different because of a much bigger database. A funny side note on ‘big’ – if we were still running the old CDRs, by now we would have run out of IDs. So, you start by choosing your indexes smartly. However, the biggest performance gain was in finding a good trade-off between ‘python-time’ and ‘query-time’. It comes down to this:

- You can query each call one-by-one, decide for which stats records this call needs to be used, and update those stats records. This is the easiest method to implement. Your machine needs a lot of query-time, but next to zero python-time.

- On the other hand, you can retrieve all calls at once, evaluate them to a big list and find some clever way to group them and calculate stats records with a minimum of queries. Lots of python-time, but less query-time. On my development machine, this was the fastest method.

- Thankfully we test things thoroughly, because on our live environment, both methods do not work well at all. The Goldilocks spot for our live environment is in the middle: we evaluate calls in chunks. Each call in a chunk has a call date within the same hour. Using some clever caching we calculate ‘hourly’ stats records from that chunk. At the end we use the numbers in all hourly stats records to create daily, weekly and monthly stats records.

Future stats

So now our statistics module runs smoothly and, thanks to CEL, fully accurate. But it does not end here. CEL got us brainstorming.

Tiny details of calls can be retrieved. How long has the phone been ringing? Did the call end after a conversation with another person? Or did it end in a voicemail or queue? If we are talking about averages: of all answered calls, how long does the customer on average need to pick up the phone? Of all unanswered calls, how long did it take for the caller to push the call away?

All are tools to provide the customer with information on how he can improve his availability – and, if done right, without disrupting his family quality time. We ‘only’ need to create these tools. The possibilities are endless. As long as it is fast.

Your thoughts

No comments so far